Just finished Regression Analysis at Georgia Tech and off to do some Bayesian Statistics. I am now on the last 3 courses before I finish my degree. Hopefully, I’ll be done by the end of 2022, fingers crossed. So far, I’ve taken the following courses:

Courses Taken:

- Introduction to Analytic Modeling – high-level theory of statistical modeling plus some R

- Introduction to Business for Analytics – survey of 4-5 business units and how they function

- Computing for Data Analysis – Python plus NumPy and pandas, with some from-scratch machine learning implementations

- Data Analytics in Business – high-level overview of regression, marketing, supply chain and investing using some lightweight stats.

- Data and Visual Analytics – One high-level project that results in an end-to-end Machine Learning or BI solution, plus a series of mini tutorials covering: a Python graph built from a movie API, Spark, SQL, a from-scratch Random Forest implementation and basic machine learning libraries in Python.

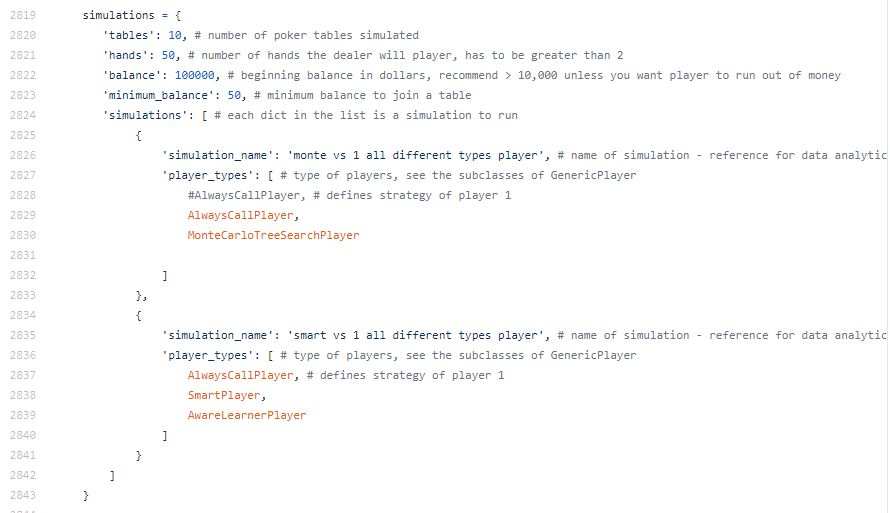

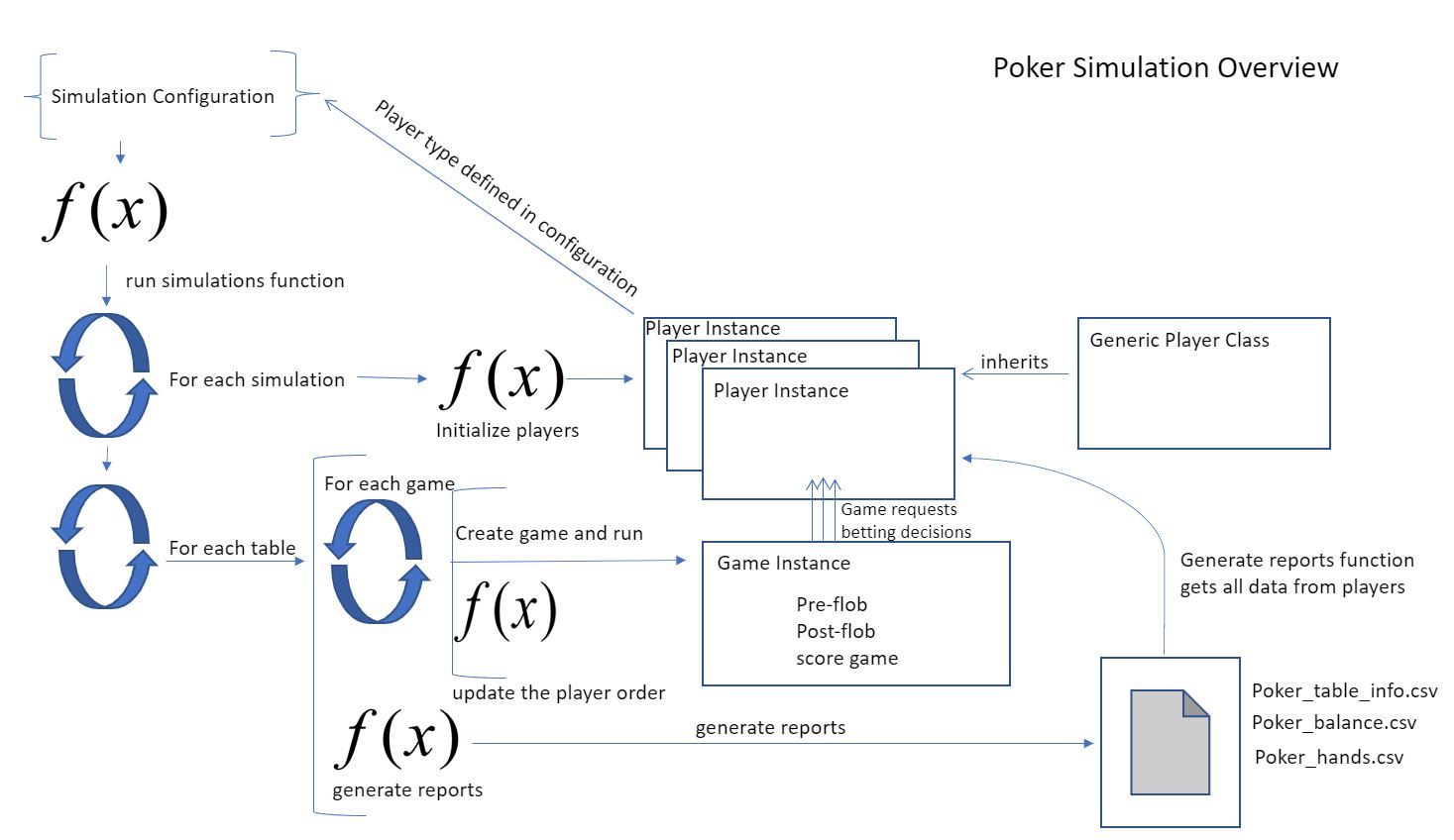

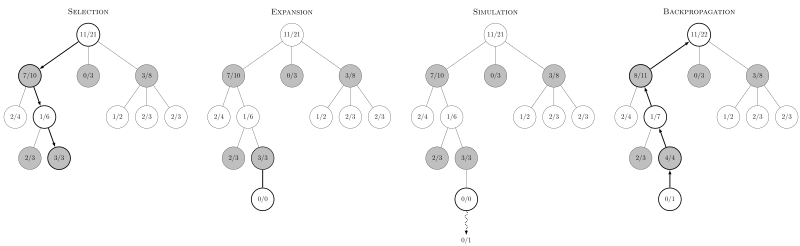

- Simulation – Lots of theory on simulation, statistics, probability and calculus, plus 2 small projects (dubbed mini-projects) where you implement some simulation-oriented concept.

- Regression – Everything is in R. It covers linear regression and generalized linear models, and dips into more advanced concepts. Lectures derive everything from scratch, which makes this easily the best regression course I’ve taken, and I’ve taken a few that cover the topic.

Courses Left:

My last 3 courses will be:

- Bayesian Statistics – This is supposed to cover similar topics to regression, but using the BUGS program and the Bayesian statistics paradigm. Sounds fun, though by no means am I an expert on that topic.

- Computational Data Analytics – This is supposed to be like Computing for Data Analysis, but significantly harder. There are no outlines or hints. They just give you an algorithm and you have to implement it from scratch in Python. Sounds like my type of course.

- High Dimensional Data Analytics – This is supposed to be a significantly harder course than Computational Data Analytics, which is already one of the harder courses. This looks into situations where you have little data compared to the number of features present. Supposedly, this course involves reading a bunch of research papers. Sounds fun.

Plus I need to complete a practicum, which seems to be some kind of internship.

Practicum – Internship of Sorts

I’m not 100% sure what the practicum is about. They have a few companies that, I guess, pitch a project for a semester and students work on it. The fabulous Laurent, someone I met on Slack who seems quite bright, mentioned that most of the projects are BI related. Some are Machine Learning related. I’m thinking of aiming for a Machine Learning heavy project, as coding is one of my favorite activities and that’s why I’m taking the program in any case. I might team up with Laurent and possibly see if I can get Shahin in from my Simulation and DVA classes.

Thoughts so Far

I think overall the program is good. The intro courses are good if you don’t have exposure to analytics. If you do, then you’ll find the electives far more interesting. They get relatively deep compared to the introduction courses. There is a good chance that I will take a few extra courses afterwards. AI, Deep Learning and Reinforcement Learning all look interesting.

Overall, I like this program and think it’s been fun. I’ve kept it kind of on the light side, taking only 1-2 courses a semester. It hasn’t been unreasonable, but I’ll admit I’ve had moments where I’ve been a bit burned out or stressed. Last term was an example of that, with a total of 8 exams over a 1.5-month period.

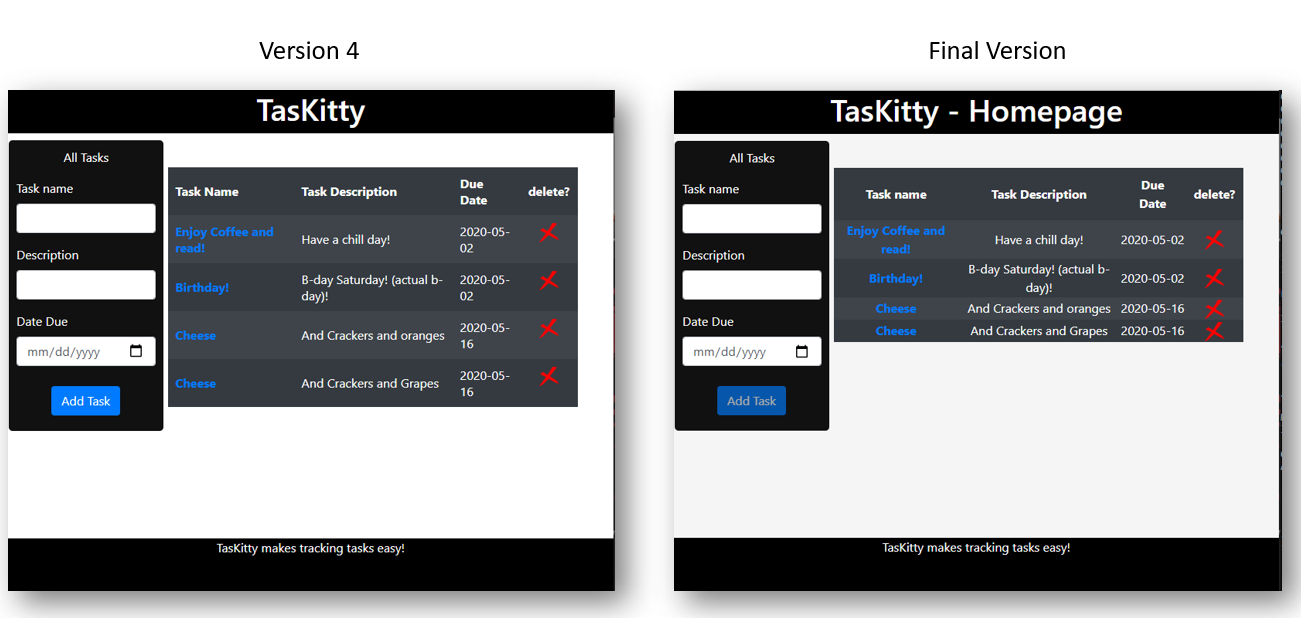

Afterwards, I feel like I’ll most likely take some computer science classes. Self-learning is also important, but it’s nice not having to research and find good material, and to have something taught to you without picking up too many misconceptions or missing some big picture items. The only con so far: not enough hands-on experience. I miss the whole creating prototypes thing. That’s half the fun in tech.

Publication

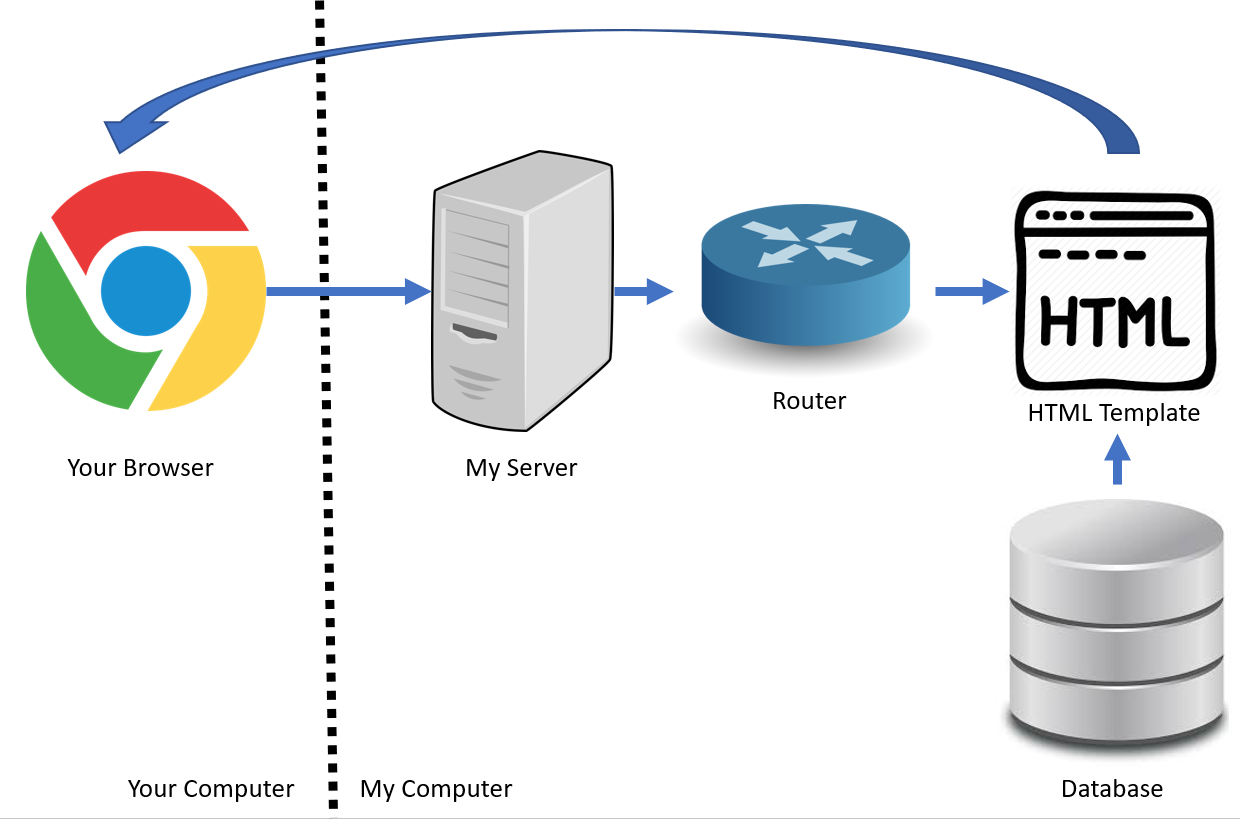

I submitted a publication for review in late June. Still waiting to hear about it. It involves an image-to-image comparison algorithm and some fancy work I did with Flask and PWA libraries. Met some great people during the project and might look at doing a second publication with them. I’ve mostly been focusing on Computer Vision applications. Took the Georgia Tech Computer Vision curriculum and found the books. Probably will read them in preparation when I have some down time. One nice thing about working on a publication is you get to really play around with ideas and prototypes. That’s been a ton of fun for me thus far.